The Ultraviolet Catastrophe: The Glitch That Broke Classical Physics

At the end of the 19th century, physicists were feeling pretty good about themselves.

Newton had given them the laws of motion. Maxwell had unified electricity, magnetism, and light into a single elegant theory. The universe looked like a vast, reliable machine — predictable, continuous, and almost fully understood. Some physicists even believed their job was nearly finished. All that was left, they thought, was to make the measurements more precise.

They were wrong. And the thing that proved it was a glowing hot object.

Why Do Things Glow When They Get Hot?

You've seen it happen. Heat an electric stove burner and it first glows dull red, then orange, then bright yellow. Put a piece of metal in a forge and it shifts from red to blinding white. The color changes with the temperature, and physicists wanted to know exactly why.

To study this cleanly, they invented a theoretical tool called a black body: an idealized object that absorbs all incoming radiation perfectly and emits radiation purely as a function of its temperature. No reflections, no impurities — just heat and light.

Real-world black bodies: A small hole drilled into a hollow cavity is the classic example. Light entering the hole bounces around inside until it's fully absorbed. When you heat the cavity, the light leaking back out through that hole is textbook black-body radiation. Stars — including our Sun — are good natural approximations too.

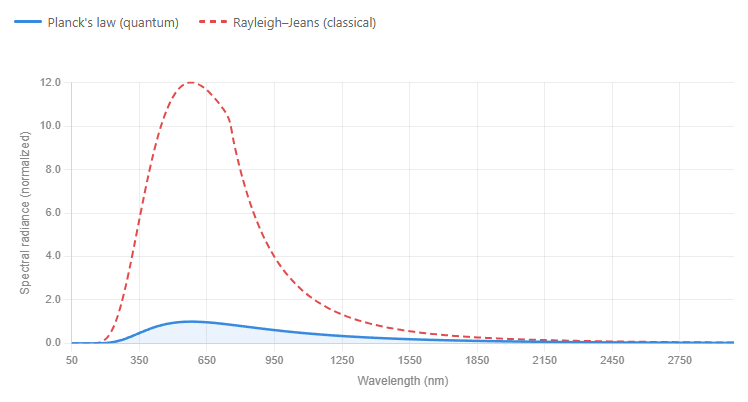

When experimenters measured and plotted the light coming off a black body, they found a smooth, single-peaked curve: intensity rises to a maximum at one wavelength, then falls away on either side. The peak shifted toward shorter wavelengths (higher frequencies) as temperature increased, explaining why hot stars look blue and cooler ones look red.
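The shift of the peak with temperature is captured by Wien's displacement law, λ_max = b / T, with b ≈ 2.898 × 10⁻³ m·K (a standard result not stated in the text above). The minimal Python sketch below just evaluates that formula; the sample temperatures are rough illustrative values, not measurements, and the function name is hypothetical.

```python
# Wien's displacement constant in metre-kelvins (CODATA value)
WIEN_B = 2.897771955e-3


def peak_wavelength_nm(temperature_k: float) -> float:
    """Wavelength (in nm) at which a black body at this temperature emits most
    strongly, from Wien's displacement law: lambda_max = b / T."""
    if temperature_k <= 0:
        raise ValueError("temperature must be positive")
    return WIEN_B / temperature_k * 1e9


# Illustrative temperatures (rough, assumed values)
examples = {
    "glowing stove burner (~1000 K)": 1000.0,
    "Sun's surface (~5800 K)": 5800.0,
    "hot blue star (~20000 K)": 20000.0,
}

for label, t in examples.items():
    print(f"{label}: peak near {peak_wavelength_nm(t):.0f} nm")
```

This puts the burner's peak near 2,900 nm, in the infrared (the red glow we see is the short-wavelength tail of its curve), the Sun's near 500 nm, and the hot star's near 145 nm, in the ultraviolet.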

The experimental data was clean. The theoretical explanation was not.

The Classical Prediction — and Why It Was Catastrophic

Two British physicists, Lord Rayleigh and Sir James Jeans, tackled the problem using the best tools classical physics had to offer. Their reasoning was perfectly logical:

1. Waves in a box. Electromagnetic radiation inside a hot cavity behaves like standing waves — similar to the vibrations on a guitar string. Many different wavelengths can fit inside a cavity simultaneously.

2. Equipartition of energy. This foundational principle of classical statistical mechanics says every possible wave (every "mode") gets the same average share of thermal energy: kT, where k is Boltzmann's constant and T is the temperature.
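The two ingredients can be combined in a toy model. As a simplifying assumption, the sketch below uses a one-dimensional "guitar string" of length L rather than a three-dimensional cavity. Standing waves on it have wavelengths 2L/n for n = 1, 2, 3, and so on, and equipartition hands each one an average energy of kT (Boltzmann's constant k times temperature T). The helper names and the 1-metre length are hypothetical, chosen for illustration.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, J/K (exact SI value)


def count_modes_1d(length_m: float, min_wavelength_m: float) -> int:
    """Count standing waves on a string fixed at both ends whose wavelength is
    at least min_wavelength_m. Allowed wavelengths are 2L/n for n = 1, 2, 3, ..."""
    return math.floor(2 * length_m / min_wavelength_m)


def classical_energy_1d(length_m: float, min_wavelength_m: float,
                        temperature_k: float) -> float:
    """Equipartition: every mode holds kT on average, so total = (mode count) * kT."""
    return count_modes_1d(length_m, min_wavelength_m) * K_B * temperature_k


L = 1.0    # a 1-metre "string" (hypothetical, for illustration)
T = 300.0  # room temperature, K
for min_wl in (1e-3, 1e-6, 1e-9, 1e-12):
    n = count_modes_1d(L, min_wl)
    energy = classical_energy_1d(L, min_wl, T)
    print(f"shortest wavelength {min_wl:.0e} m: {n:,} modes, {energy:.2e} J")
```

Each thousandfold cut in the shortest allowed wavelength multiplies both the mode count and the energy by about a thousand, with no ceiling. In three dimensions the count grows even faster: the number of modes below a given frequency scales with the cube of that frequency.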

Here's where things went wrong.

As wavelengths get shorter, more and more waves can fit inside the cavity. There's no limit to how many short waves you can pack in, and classical theory said each one receives an equal slice of energy. The number of short-wavelength modes grows without bound.

So does the energy assigned to them.

The result: the predicted energy kept climbing toward short (ultraviolet) wavelengths with no ceiling, and the total radiated energy came out infinite.
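In a real three-dimensional cavity, counting modes gives 8πν²/c³ standing waves per unit volume per unit frequency, so with kT per mode the classical (Rayleigh-Jeans) spectral energy density is u(ν, T) = 8πν²kT/c³. Integrating from zero up to a cutoff frequency ν_max gives 8πkTν_max³/(3c³). The Python sketch below evaluates both; the temperature, the cutoffs, and the function names are illustrative choices, not part of the original argument.

```python
import math

K_B = 1.380649e-23   # Boltzmann constant, J/K (exact SI value)
C = 299_792_458.0    # speed of light, m/s


def rayleigh_jeans_density(nu_hz: float, temperature_k: float) -> float:
    """Spectral energy density in J per m^3 per Hz: the number of modes per unit
    volume per unit frequency (8*pi*nu^2/c^3) times kT from equipartition."""
    return 8 * math.pi * nu_hz**2 * K_B * temperature_k / C**3


def rayleigh_jeans_total(nu_max_hz: float, temperature_k: float) -> float:
    """Energy per m^3 in all modes from 0 up to nu_max: the integral of the
    density above, 8*pi*k*T*nu_max^3 / (3*c^3). It has no finite limit."""
    return 8 * math.pi * K_B * temperature_k * nu_max_hz**3 / (3 * C**3)


T = 300.0  # room temperature, K (illustrative)
# Cutoffs running from the infrared through the ultraviolet into soft X-rays
for nu_max in (1e14, 1e15, 1e16, 1e17):
    print(f"cutoff {nu_max:.0e} Hz -> {rayleigh_jeans_total(nu_max, T):.3e} J/m^3")
```

Every tenfold increase in the cutoff multiplies the total by a thousand, and nothing in the formula stops the climb: remove the cutoff and the total is infinite.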

Taken at face value, this meant your toaster should be blasting you with lethal X-rays, and a warm cup of tea should be pouring out unlimited energy. In 1911, physicist Paul Ehrenfest gave this absurd prediction its memorable name: the Ultraviolet Catastrophe.

This wasn't a rounding error. It was classical physics producing a universe-ending result from a perfectly reasonable-sounding argument.

Max Planck's Desperate Fix

In 1900, German physicist Max Planck decided to approach the problem differently. Rather than deriving a formula from first principles, he worked backward: what equation would actually match the experimental curve?

He found one. It fit the data perfectly. The catch: making it work required an assumption so strange that Planck himself called it "an act of desperation."

Classical physics assumed energy was continuous — like water, it could exist in any amount. Planck's formula only worked if energy came in fixed, indivisible chunks. He called these chunks quanta.

Specifically, he proposed that the energy E of each quantum is tied directly to its frequency ν:

E = hν

Here h is what we now call Planck's constant, about 6.626 × 10⁻³⁴ joule-seconds: one of the most important numbers in all of physics.
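To get a feel for the sizes involved, the sketch below compares the energy of single quanta, E = hν = hc/λ, with kT, the typical thermal energy per mode (k is Boltzmann's constant). This comparison is an illustration added here; the 1000 K temperature is an assumed, roughly red-hot value, and the function name is hypothetical.

```python
H = 6.62607015e-34   # Planck's constant, J*s (exact SI value)
K_B = 1.380649e-23   # Boltzmann constant, J/K (exact SI value)
C = 299_792_458.0    # speed of light, m/s


def quantum_energy(wavelength_m: float) -> float:
    """Energy of one quantum, E = h * nu, using nu = c / wavelength."""
    return H * C / wavelength_m


T = 1000.0     # roughly red-hot, K (illustrative assumption)
kT = K_B * T   # typical thermal energy per mode at this temperature

for label, wl in [("red light, 700 nm", 700e-9),
                  ("ultraviolet, 100 nm", 100e-9),
                  ("X-ray, 1 nm", 1e-9)]:
    e = quantum_energy(wl)
    print(f"{label}: E = {e:.2e} J, about {e / kT:,.0f} kT")
```

At 1000 K a quantum of red light already costs about 21 kT, an ultraviolet quantum about 144 kT, and an X-ray quantum about 14,000 kT.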

This changes everything. High-frequency (short-wavelength) waves require large quanta of energy to exist at all. Once a mode's quantum hν is much larger than the typical thermal energy on hand at a given temperature, the odds of exciting even one quantum in that mode become vanishingly small. So instead of pouring infinite energy into ultraviolet waves, the system effectively shuts them off. The catastrophe disappears.
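The "shutting off" can be checked numerically. In the modern textbook version of the argument (not Planck's own 1900 derivation), a mode of frequency ν may hold only 0, hν, 2hν, and so on, with each level weighted by the Boltzmann factor e^(−E/kT). Averaging over that ladder gives a mean energy per mode of hν / (e^(hν/kT) − 1) instead of the classical kT. The Python sketch below works in units where kT = 1 (an assumption for readability) and compares a brute-force sum with that closed form; the function names are hypothetical.

```python
import math


def mean_energy_discrete(quantum: float, kT: float, n_terms: int = 100_000) -> float:
    """Average energy of one mode whose allowed energies are 0, quantum,
    2*quantum, ..., each weighted by exp(-E/kT). Summed directly, truncated
    once the weights underflow to zero (or after n_terms levels)."""
    numerator = 0.0
    denominator = 0.0
    for n in range(n_terms):
        weight = math.exp(-n * quantum / kT)
        if weight == 0.0:
            break  # all remaining terms are too small to represent
        numerator += n * quantum * weight
        denominator += weight
    return numerator / denominator


def mean_energy_closed_form(quantum: float, kT: float) -> float:
    """Planck's result: quantum / (exp(quantum/kT) - 1); expm1 keeps small
    quanta accurate."""
    return quantum / math.expm1(quantum / kT)


# Work in units of kT = 1, so each x below is h*nu measured in units of kT
for x in (0.01, 0.1, 1.0, 5.0, 20.0):
    print(f"h*nu = {x:g} kT: discrete sum {mean_energy_discrete(x, 1.0):.4g} kT, "
          f"closed form {mean_energy_closed_form(x, 1.0):.4g} kT, classical 1 kT")
```

When hν is small compared with kT, both results approach the classical value of 1 kT; when hν is 20 kT, the average falls to roughly 4 × 10⁻⁸ kT. Multiplying this mean energy, instead of kT, by the mode density gives Planck's radiation law, whose total energy is finite.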

Planck had fixed the formula — but in doing so, he had quietly shattered the assumption that nature is continuous. He found it deeply unsettling, and spent years trying to walk it back. He couldn't.

The Bigger Picture

The Ultraviolet Catastrophe is a remarkable story because the failure of classical physics wasn't subtle. It didn't predict slightly too much energy at short wavelengths — it predicted infinite energy. The breakdown was total and undeniable.

And yet the fix didn't come from a dramatic paradigm shift or a revolutionary manifesto. It came from a methodical physicist who just wanted the math to work, reluctantly accepting that nature had to be fundamentally different from what everyone had assumed.

That assumption — that energy is continuous — had never been questioned. It seemed too obvious to doubt. The Ultraviolet Catastrophe forced physicists to ask what other "obvious" things might be wrong.

Quantum mechanics is, in many ways, still the answer to that question.